First of all, let me say that I like theory. I like convoluted and complex language when it represents careful and complex argumentation and analysis. I actually enjoy reading Deleuze, I think philosophy is fun, and I will almost never dismiss complicated text as mumbo-jumbo. Today is an exception, and Voice or chatter? is an apt title for the recent research report from MAVC.

This research report explores the “conditions in democratic governance that make information and communication technology (ICT)-mediated citizen engagement transformative,” on the basis of eight country cases. Ultimately, however, it fails to deliver meaningful insights. The bulk of the text engages with broad theoretical frameworks and a long list of findings, both of which are convoluted and largely detached from the empirical cases. Though the cases receive only meager attention (8 of 68 pages), their description is the most compelling portion of the report. All in all, this document is a difficult and unrewarding read. But its weight and ambition make it seem important.

I read this report so that you don’t have to. Below is a quick summary.

In brief, this report was oddly structured, resulting in a difficult, disjointed read. The findings were generally presented as non-controversial abstractions, largely unrelated to the empirical cases. Nonetheless, below I list all 24 of them with an explanation and comments for your skimming pleasure. Neither theory nor methods were clearly applied in this research, though a complex theoretical framework was described at length. The case studies were by far the most interesting and compelling portion of the report, despite profound brevity.

For a detailed review, feel free to skip to the Structure, the Findings, the Theory and Methods, the Cases, or my conclusion.

The Structure

Let’s get it out of the way. This report is a long, hot mess. The introduction is rambling, the literature review random, and the methods description speaks almost exclusively to case selection (its 39 words on within-case methods describe interviews, but only insofar as to mention that “selection of key informants was a case-specific exercise”). The structure is also challenging, without clear through lines from theory, to cases, to findings, and to conclusions, or really between any two of those components. This is frustrating, because a failure to apply structuration theory in either case study analysis or findings means that there is no reward for having read about it.

Poor structure also impedes basic knowledge capture. Descriptions of the cases are, for example, split into a series of sequential descriptions. Each country is described in terms of quality of governance, then each is described in terms of e-participation and then in terms of infrastructure, before you even get to the descriptions of the cases. So if you want to understand what’s happening in Colombia you have to flip between four sections, before looking for how Colombia is referenced in each of the 24 findings. As such, there might be a lot in this report I didn’t get, despite having read it three times.

The Findings

Chatter’s findings come at the end of the report, but are ostensibly the most important, so I’ll present them first. There are a whopping 24 findings in total, each with a short explanation and together comprising the bulk of the report (24 of 68 pages). Generally, the findings either present obvious truisms, or provocative claims with little empirical consequence. There is generally only very superficial reference to the empirical cases, and no argument or method indicating that the findings draw directly from the cases. When cases do provide interesting examples, the lack of analysis and lack of description preclude any real insight, and I’m left wishing that this report chose to focus on one or two of the findings.

I’ll list the findings below for skimming, together with an explanation when necessary, and a short comment.

- The digital moment is marked by normative flux.

Explanation: Norms around participation are changing.

Comment: Norms are always changing. It’s not clear whether the authors believe this is distinct to the introduction of digital technology or the development of participatory processes.

- Digital mediation frameworks are shaped by, and in turn determine, agentification.

Explanation: The use of technology introduces new actors and new roles, which then shape the way that tech is used.

- Agentification in the digital space reflects norm flux and can present a normative crisis

Explanation: When private sector actors take over public sector roles, this limits the potential to improve governance.

Comment: This is only represented by the cases to a limited degree, and described alternatively as a positive contribution towards meaningful participation in the case descriptions, and a negative development in this finding. There is no reflection on how or when this happens.

- New norms on openness and transparency are used variously by different political regimes

Comment: Yes, norms are abstract and instrumental, hence concerns about open washing. See 50 shades of open, Francoli’s assessment of how open gets interpreted differently in OGP national action plans, or Carla Winston’s work on norm clusters.

- Deliberation as a norm is expanded or restricted based on the technological choices made by the state

This is an obvious truism: tech choices impact projects and communication. It might also be the most useful inquiry this kind of report could pursue. There were specific aspects of this in each of the case studies, and tracing these kinds of decisions and consequences across these cases in a systematic way might yield greater insights than the platitude above. It would also require more than the 200 words of analysis it was allotted here. It could be a report of its own.

- With the transition to the digital, datafied decision-making becomes a norm

Clearly, in some situations, the flashy newness of online engagement and participation can eclipse offline expression of citizen voice. It would be useful to see an analysis of how and when this happened in the case studies.

- In digital democracy, institutional commitment is key to the right to be heard

I’m not sure what they mean by this.

- Citizen participation frameworks valorise enterprise, expertise and cooperation

The report presents this finding as consistent across cases and notes that “even within [cases] which allow for agenda-setting and claims-making possibilities, there is a separation between pedestrian (‘like-and-click’) and more sophisticated (working-the-platform) zones of participation.” This deserves closer attention. I would like to know how it is influenced by contextual factors like the strength of civil society and the degree of inequality in countries.

- Transnational codes and configurations influence national visions of citizen engagement

Explanation: International norms matter.

Comment: Well yes, but we don’t know much about how or why. Though the report lists cases where that seems to have happened, these descriptions are simple and non-critical.

- Digital affordances redefine the scale and shape of citizen participation

Explanation: Tech allows people to do new things, which changes relationships.

Comment: Yes, clearly, but this analysis mentions only three examples of new coalitions, and offers no insights.

- Neoliberal e-governance reduces the political ideal of participation to administrative problem-solving

I’m not sure what this means. The following text does not support that title with a cogent argument.

- Participation is envisioned as voluntarism for public value

Explanation: A voluntarism framing is bad because it obscures and reinforces biases in representation.

Comment: This framing seems consistent across all cases selected for this report (which in itself poses a challenge to generalized claims), though there are only two clear examples of exclusion described. If there are more, or any consistency in how this happens across cases, it should be described.

- Techno-authentication is a precondition for legitimate participation

Explanation: Requiring digital identity can exclude people.

Comment: Of course it can. This finding should describe specific examples of that happening and how it happened.

- Techno-authentication presents new contradictions and challenges to citizen rights

Explanation: Lack of anonymity can be problematic in participatory mechanisms, especially when formal protections for privacy and data are lacking.

Comment: Yes, but how is this grounded in the cases? Only one is listed (Spain), and as an exception to this generality. Are there examples of how this problem was manifest?

- As older repertoires of citizen action are invalidated, marginal citizens experience a crisis of knowledgeability

Comment: This makes no reference to the cases at all.

- Techno-design can signify values of democracy, but to codify democracy takes strong institutional measures

Explanation: E-participation is not the same as democracy?

Comment: Duh. For a more thoughtful rant at the same level of abstraction, see Grönlund; for a practical example, spend more than 200 words on literally any example.

- In a datafied state, outcomes for voice are contingent on governance of code

I don’t know what they mean by this.

- The quality of participation is directly contingent on levels of trust between state and citizen

Explanation: Trust matters.

Comment: Of course it does, and the cases included in this report are usefully varied in exemplifying how it matters. An exploration of this relationship would be useful. That could be done productively with these case studies or with only one of them, but would require systematic analysis. Simply stating the obvious does not add value.

- ICT-mediated citizenship is a work in progress to balance direct and representative democracy

Explanation: E-participation represents a move away from representative, towards direct democracy

Comment: This claim is dramatically unsupported by any evidence or analysis in this report. Even if it were, it would have no direct empirical implications.

- The social practices of technology can tip into political action

Explanation: I’m not sure; the analysis seems to argue that online filter bubbles damage the quality of participation?

- Through democratic practices of technology, citizens ‘hack’ politics

Explanation: Young people are increasingly attracted to digital politics?

Comment: I’m not quite sure what’s intended here; this finding isn’t supported by evidence or analysis.

- Citizens hone new political consciousness through new knowledgeability

I have no idea.

- Differential access means differential participation

Explanation: Inequalities of access and representation influence digital participation

Comment: The report superficially references inequalities of access in five of the case studies. Description and exploration might make this insight more meaningful.

The Theory and Methods

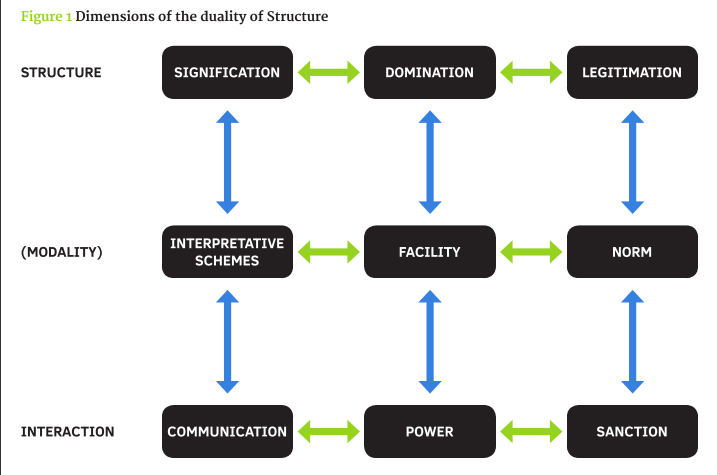

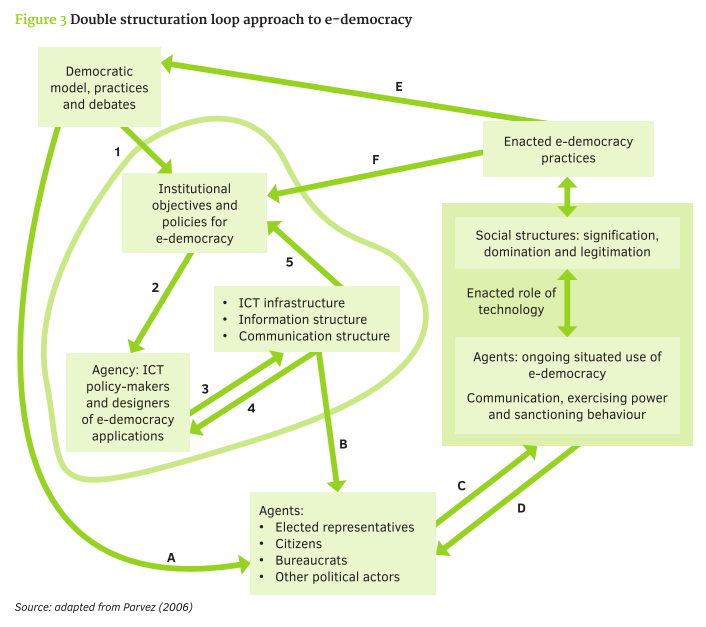

This report’s research “framework” is more generally a vague gesture to structuration theory. Structuration theory deserves a lot more careful explanation than it gets here, and the authors’ casual application of the theory results in a lot of text with little insight. Occasionally there’s a diagram, which emphasizes how provocative structuration theory can be at the level of generality, and how little it has to offer empirical analysis.

Structuration theory might have something to offer in understanding individual cases. Unfortunately, however, the emphasis of this report is on comparative analysis, and there isn’t enough detail or method to give this theory traction. This makes for a lot of slogging through theoretical text that never pays off. It also produces weirdly complicated conclusions.

Make sense of the graph below, I dare you. It’s like the analysis is angry at the mapping innovation of systems theory, and wants to prove that it can’t be told what to do.

The Cases

This is by far the most meaningful part of the report, and the eight pages describing initiatives are worth reading. Here’s a summary version, to highlight those of interest.

- Participatory Hazard Mapping (South Africa)

An NGO used public wifi in Warwick Market for traders to document their working environment. This led to committees, multi-platform reporting (Frontline SMS, Ushahidi) and a visual mapping. Regular contact with government facilitated adapted training for public service providers and for consultations.

- General Grievance Portal (India)

Statewide portal for citizen grievances on any aspect of governance, initiated by government. Includes a dashboard where complainants can track complaints, send reminders, and rate the quality of response. Multi-platform, with backend capacity to aggregate and track complaints and responses across agencies. This has been complemented by numerous in-person hearings and institutional coordination mechanisms in government. The prominent advocacy NGO MKSS was able to hack the platform for collective complaints. Not all parties are satisfied with the quality of response.

- Open Data Portal (the Philippines)

A World Bank and government initiative led to the Open Data Task Force and the national OD portal. The portal contains a significant amount of data, not all of which is machine readable, limiting its usefulness. The Task Force has also organized consultations and hackathons, with mixed results. The report suggests that the lack of FOI legislation is partly to blame for a lack of transformative impact.

- Open Policy Proposals (the Netherlands)

Citizens are permitted to submit proposals for legal and policy frameworks, and they are guaranteed debate if they achieve 40,000 citizen endorsements. Though discussed on a variety of media platforms, there is no centralized web site for the initiative, and it has been criticized for soliciting shallow input rather than dialogue.

- Deliberative Platform (Spain)

Decidim Barcelona is an implementation of the open source CONSUL platform that allows anyone to propose and comment on city council policy. The city council allocated staff to support the initiative, ran media campaigns, and set up kiosks around town to train users. Some issue-based coalitions have developed around deliberations on the platform.

- Participatory policy-making (Brazil)

An open blog platform was used to include the public in drafting an internet bill of rights in two stages, first accepting proposals for content, then allowing comments on a draft bill. Brazil also held an online consultation for copyright law, which featured some UX features and tracked users’ IP addresses in order to flag instances of interest group spamming. Offline inputs were not represented on the platform. The report suggests that the outcomes of both processes did not significantly influence policy, due to lobbying by interest groups.

- E-Participation (Colombia)

A policy and platform were launched in 2011, including “Q&A exercises where government departments address citizens’ information queries,” citizen complaint processes to specific institutions, and “e-discussions on public policy matters.” Trust in and use of the platform are limited by poor governance of the platform and low trust in government generally.

- OGP Action Plan (Uruguay)

The development of OGP action plans has become more inclusive over time, and social media are now used to coordinate activities, share information about activities, and solicit public comments on specific content. The second plan also led to a civil society partnership and production of a health app. The report notes that public participation in online consultations has been low, but suggests that “the multi-stakeholder mechanism put in place for [this coordination] has led to the rise of a new type of civil society organisation” (tech savvy and pragmatic).

There isn’t a lot more detail than this in the actual report. So if any of these spark your interest, you’ll want to google additional information. But despite this brevity and a number of provocative and unsupported claims, it makes for an inspiring read.

Conclusion:

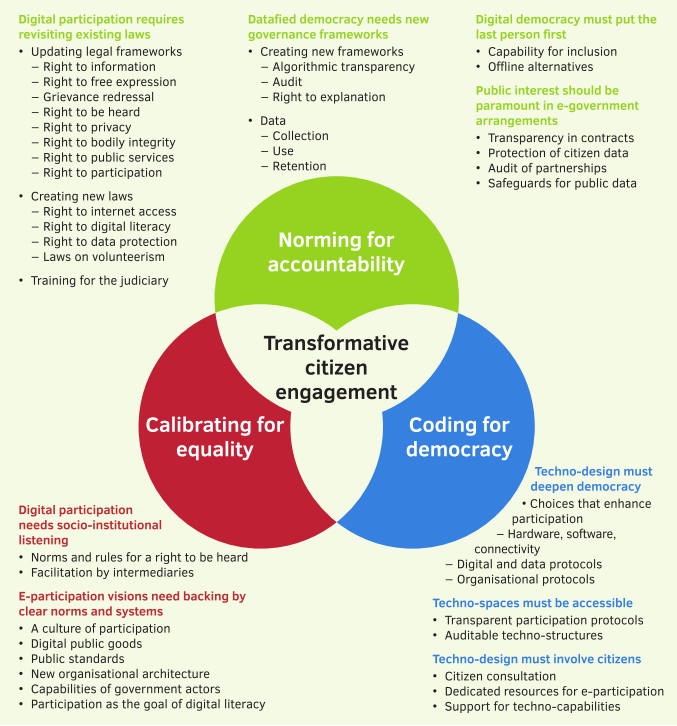

This report concludes with four abstract recommendations (1. Digital participation requires revisiting existing laws, 2. Datafied democracy needs new governance frameworks, 3. Digital democracy must put the last person first, 4. Public interest should be paramount in e-government arrangements). I won’t go into them; they are as non-contentious as they are impossible to operationalize in any immediate sense. The below Venn diagram gives a better overview of the essence than does the text.

Reading this report was a lot of work. @participatory called it good reading, but he’s exceptionally interested and uniquely well informed. For those of us not as well positioned, I don’t expect many will get through it.

It was probably also a lot of work to produce, and I can’t help wondering how much it cost (or if MAVC paid per finding). All in all, it’s a shame if something that demands so much effort doesn’t produce something more useful. When I decided to blog this report, I was really hoping to uncover the hidden ways in which it did. But I simply didn’t find them. There’s a short list of interesting initiatives, a long list of uninteresting findings, and a case study in how not to use theory. We often hear complaints that nobody bothers to read PDF reports. This is part of the reason why.