@allvoicescount is having their final learning event this week, and starting to draw conclusions from some of their research outputs. This seems like a good time to be consistently reminding ourselves about all the complementary research on the same issues. There’s a tremendous amount of research being produced behind the ivory curtain. Presumably, we don’t have time to read it, or can’t get past the paywalls. But the civic tech community does itself a disservice to talk broadly about learning and evidence without acknowledging that we deal primarily with the tips of evidence icebergs, and without frankly discussing how different formal research and its outputs are from what gets produced inside our conference circles.

In that spirit, here are some takeaways from a recent scientometric analysis of scholarly literature on government transparency. It covered 10 years of scholarly research (24,401 articles across 69 journals).

The article is worth reading in its entirety, but for those pressed for time, here are the findings I found most and least surprising about academic research on government transparency.

1. Transparency receives little attention compared to other issues (just 0.75% of all articles included in the sample).

Some journals clearly dominate. For example, Government Information Quarterly accounted for 53.66% of all articles published in the study’s “information and library science” grouping. Overall, these five journals accounted for the most transparency-focused articles: Government Information Quarterly (13.4%), International Review of Administrative Sciences (8.5%), Public Choice (4.9%), Local Government Studies (3.7%) and Online Information Review (3.7%).

2. A focus on voluntary transparency dominates, and A2I dominates studies on mandatory transparency.

3. Methods are changing, and quant is gaining steam. Check out this graph.

Though the authors note that “throughout our study period there is a clear preference for the use of quantitative tools to analyse voluntary transparency.”

4. Perhaps most (un?)surprising: non-empirical methods dominate the study of mandatory transparency mechanisms, while empirical methods dominate the study of voluntary transparency. When it’s mandated it’s too late, so who cares if it works?

5. The qualitative tool that is most frequently employed is that of the case study (8.5%), especially with respect to the proactive voluntary disclosure of financial information (44%).

6. Finally, the authors identified a number of niche areas that have emerged in transparency research, and note avenues for further research. Some of these, like research on “stakeholders’ perceptions and expectations of transparency and the question of the reliability of transparency” will resonate well with the civic tech community.

This study likely confirms many suspicions, and we in the civic tech community are always happy to reference or subtweet evidence that confirms our position. The question, though, is whether we can make actual use of the tremendous amount of evidence already out there. Because we’re not doing it now.

Check out the bibliography of papers the authors included in their close analysis. These do not get cited or referenced in arguments about civic tech evidence. And this body of evidence on government transparency is just one of many icebergs about whose tips we quibble.

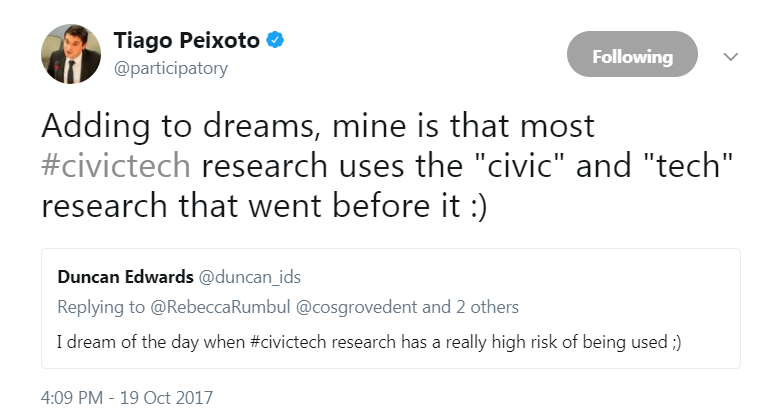

As global networks gather this week to discuss what they’ve learned over several years of civic tech research, there should be a deliberate effort to think beyond our communities and funding circles, to build on what’s been learned more generally.